WhatsApp chatbots in healthcare: the moment this topic comes up, I see two types of reactions.

Type 1: “Wow, this could solve so many operational problems.” (Correct instinct.)

Type 2: “But wait — can we even do this in healthcare? Is it legal? What if the bot says something wrong and a patient acts on it?” (Also correct instinct — and critically important.)

Both reactions are valid. And both need to be addressed before any hospital, clinic, or healthcare provider builds a WhatsApp healthcare chatbot.

Because I’ve seen healthcare chatbot implementations done right — transforming patient experience, reducing staff workload, improving operational efficiency. And I’ve seen them done wrong — creating confusion, legal exposure, and patient harm risk.

The difference isn’t the technology. It’s understanding where the chatbot belongs in the patient journey and where the human must take over.

After 4+ years in WhatsApp automation and 15+ years in IT — I can tell you this with confidence: WhatsApp healthcare chatbots work brilliantly when deployed for the right use cases. And they become liabilities when deployed beyond those boundaries.

Let me draw the map clearly.

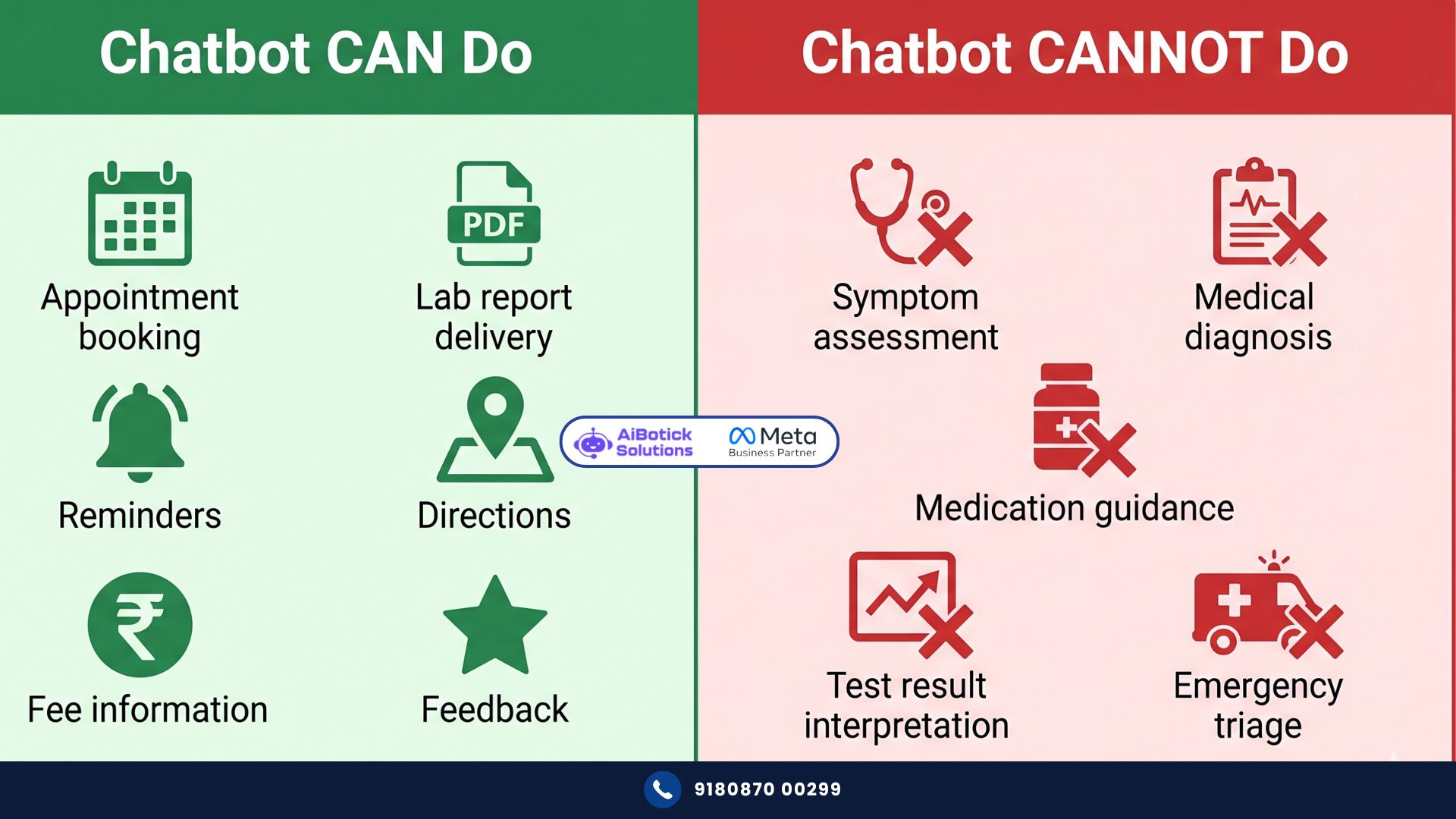

The Core Principle — Administrative vs Clinical

Before any specific use case, any technical detail, any implementation question — this one principle must be understood.

A WhatsApp healthcare chatbot is an administrative assistant. Never a clinical one.

Administrative: appointment booking, reminders, report delivery, directions, fee information, department routing, visiting hours.

Clinical: diagnosis, symptom interpretation, treatment recommendations, medication dosage guidance, test result interpretation, prognosis.

The first category, the bot does brilliantly. The second category, the bot must never touch. Not even tangentially.

“Which doctor should I see for my knee pain?” — this looks like a routing question but is actually a clinical question. Knee pain could be orthopedic, rheumatology, physiotherapy, or neurology depending on the specific presentation. A bot routing to “orthopedics” without understanding the clinical picture could delay appropriate care.

The boundary matters. And it’s not always as obvious as “is this medical advice?” Always err on the side of caution.

What a WhatsApp Healthcare Chatbot CAN Do — The Full Permitted List

Let me be thorough. Because the permitted zone is genuinely large and valuable.

Appointment Management

- New appointment booking (department selection, slot selection, patient details)

- Existing appointment confirmation, rescheduling, cancellation

- Queue management updates (“Dr. [Name] is running 30 minutes late”)

- Multi-doctor scheduling for the same patient on different days

- Priority appointment flagging (patient indicates urgency — routes to human for clinical triage)

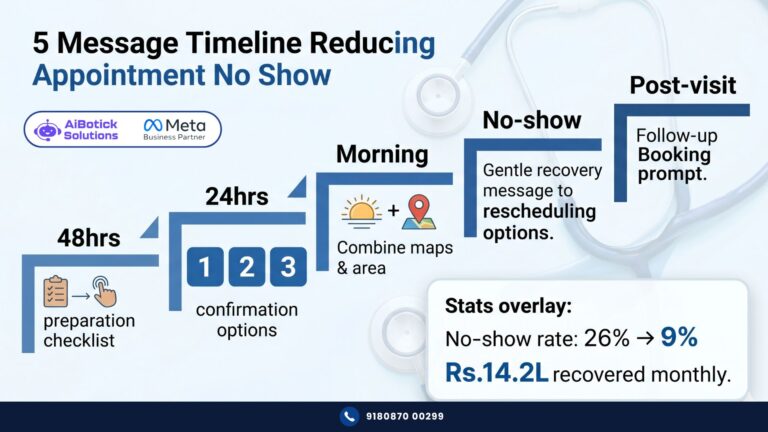

- Appointment reminders at 48 hours, 24 hours, and morning-of

- No-show recovery — rescheduling offers to patients who missed appointments

All covered in detail in our WhatsApp appointment booking guide for clinics and hospitals.
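As a rough illustration of the 48-hour, 24-hour, and morning-of reminders listed above, here is a minimal Python sketch. The function name `reminder_times`, the `booked_at` parameter, and the 8am default for the morning-of message are my assumptions for illustration, not part of any specific platform.

```python
from datetime import datetime, time, timedelta


def reminder_times(appointment: datetime, booked_at: datetime,
                   morning_hour: int = 8) -> list[datetime]:
    """Return reminder timestamps: 48 hours before, 24 hours before, morning-of.

    Reminders that would fall before the booking was made, or at/after the
    appointment itself, are skipped.
    """
    candidates = {
        appointment - timedelta(hours=48),
        appointment - timedelta(hours=24),
        # Morning-of reminder at an assumed fixed hour on the appointment date.
        datetime.combine(appointment.date(), time(hour=morning_hour)),
    }
    return sorted(t for t in candidates if booked_at < t < appointment)


# Hypothetical appointment, booked two weeks ahead.
appt = datetime(2025, 3, 14, 10, 30)
for t in reminder_times(appt, booked_at=datetime(2025, 3, 1, 9, 0)):
    print(t.isoformat())
```

A patient who books the evening before simply gets fewer reminders, because anything scheduled before `booked_at` is dropped rather than sent late.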

Patient Information Delivery

- Clinic/hospital timings and holiday schedule

- Doctor profiles and availability

- Fee structure by department and procedure

- Insurance panels and accepted insurance providers

- Directions, parking instructions, public transport options

- Preparation instructions for specific procedures (fasting requirements, what to bring)

- Visiting hours for IPD patients

- Contact numbers for different departments

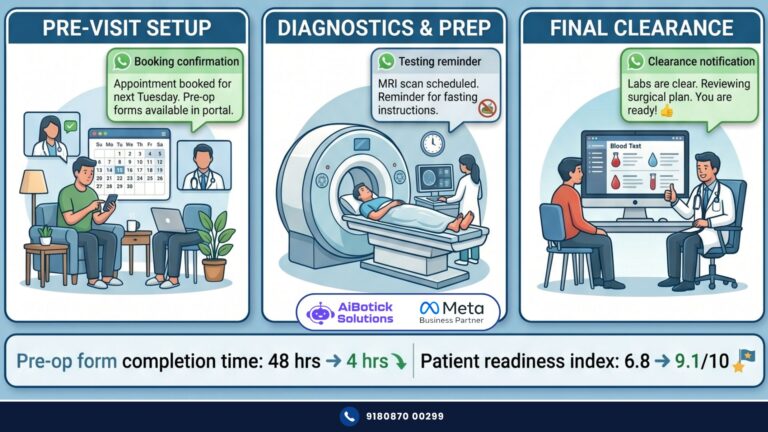

Post-Appointment Support

- Discharge summary delivery (PDF)

- Prescription delivery (PDF — already prescribed by doctor)

- Lab report delivery (PDF — already generated by lab)

- Medication reminder schedules (not dosage guidance — just reminders for medications already prescribed)

- Follow-up appointment reminders

- Post-discharge warning signs (as specified by doctor, delivered verbatim)

Patient Feedback and Reviews

- Star rating collection after consultations

- Positive review routing to Google

- Complaint capture and escalation to patient relations

Administrative Queries

- Bill and payment queries routing

- Insurance claim status routing

- Health package information

- Health check-up package details

- Corporate tie-up information

This is a substantial list. A WhatsApp healthcare chatbot covering all of these handles 70-80% of all inbound queries at most clinics and hospitals. Without clinical risk. Without regulatory exposure.

What a WhatsApp Healthcare Chatbot CANNOT Do — The Hard Lines

These are non-negotiable. No exceptions. No “but in this specific case.”

Symptom Assessment or Triage

Example of what NOT to build: “What are your symptoms? → Patient: chest pain and shortness of breath → Bot: This could be cardiac or pulmonary. I’ll route you to our cardiologist.”

This is clinical triage. The bot is making a clinical judgment based on symptoms. This is practicing medicine without a license — regardless of how the bot is framed.

The right flow: the bot asks “Please describe your concern” → the patient mentions any symptom or medical concern → the bot says: “For any medical concern, please call us at [Number] so our staff can properly assess your need. For emergency symptoms, call 108 or visit the nearest emergency room immediately.”

The rule: Symptoms in → human out. Always. Zero bot involvement in symptom assessment.

Medication Guidance

What NOT to build:

- “Can I take this medication with food?” → bot answers

- “What is the dosage for my child?” → bot calculates

- “I forgot my dose — what should I do?” → bot advises

These are clinical questions. Even straightforward-seeming ones. “Can I take paracetamol with my current medication?” — the bot doesn’t know the patient’s full medication list, their conditions, their allergies.

The rule: Any question about medication — even seemingly simple ones — must go to a human or be explicitly referred to the prescribing doctor.

Test Result Interpretation

Lab report delivered via WhatsApp — great. WhatsApp healthcare chatbot saying “Your haemoglobin is 9.2 which is below normal — this indicates anaemia” — absolutely not.

The report is information. Interpretation is clinical. The bot delivers. The doctor interprets. Full stop.

The rule: “Here is your report” — acceptable. “Your report shows…” beyond factual delivery — not acceptable.

Emergency Management

This one I feel strongly about. Because I’ve seen healthcare chatbots that say things like: “For emergencies, we have a 24-hour helpline.” That’s fine. What’s not fine is any delay or friction in the emergency path.

A WhatsApp healthcare chatbot must have an emergency detection layer. Keywords like “chest pain,” “can’t breathe,” “unconscious,” “bleeding heavily,” “accident,” “stroke” — these must trigger an IMMEDIATE response: “This sounds like a medical emergency. Call 108 now or go to the nearest emergency room immediately. Do not wait.”

And then — optionally — share your hospital’s emergency contact if they can reach you quickly.

The emergency detection and bypass of all normal flows — this is not optional. It’s a patient safety requirement.

Mental Health Content

This deserves special mention. Mental health is an increasingly common patient concern — and patients sometimes share distressing content in WhatsApp healthcare chatbot conversations.

“I’ve been feeling very low and don’t want to continue” — this is a potential mental health crisis. No chatbot should engage with this content beyond immediate crisis referral.

Build explicit detection for mental health distress language. Any such message → immediate: “I can hear that you’re going through a difficult time. Please call iCall (9152987821) or Vandrevala Foundation (1860-2662-345) right now. Our clinical team is also available at [number]. You’re not alone.”

And flag this conversation for immediate human review.

Building a WhatsApp Healthcare Chatbot That Passes the Compliance Test

Now that boundaries are clear — let me show you how to build within them effectively.

The Safety Architecture

Every WhatsApp healthcare chatbot needs four safety layers built in from Day 1:

Layer 1 — Emergency Keyword Detection: Build a keyword list (minimum 25-30 words): emergency, chest pain, can’t breathe, unconscious, accident, bleeding, stroke, paralysis, seizure, pregnant, labour, water broke, baby not moving, etc.

Any of these keywords in any message — immediate emergency response, bypass all other flows, human alert fired simultaneously.
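To make Layer 1 concrete, here is a minimal Python sketch of keyword detection. The keyword list is only an illustrative subset of the one described above, and the names `normalize` and `is_emergency` are my own. A real list and the response wording would need sign-off from your medical director.

```python
import re

# Illustrative subset only. The production list should be longer and
# approved by the medical director.
EMERGENCY_KEYWORDS = [
    "emergency", "chest pain", "can't breathe", "cannot breathe",
    "unconscious", "accident", "bleeding", "stroke", "paralysis",
    "seizure", "labour", "water broke", "baby not moving",
]

EMERGENCY_REPLY = (
    "This sounds like a medical emergency. Call 108 now or go to the "
    "nearest emergency room immediately. Do not wait."
)


def normalize(text: str) -> str:
    """Lowercase, drop apostrophes (straight or curly), strip punctuation."""
    text = text.lower().replace("\u2019", "'").replace("'", "")
    text = re.sub(r"[^a-z0-9\s]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


# Word boundaries stop "accident" from firing on "accidentally".
_PATTERNS = [
    re.compile(r"\b" + re.escape(normalize(k)) + r"\b")
    for k in EMERGENCY_KEYWORDS
]


def is_emergency(message: str) -> bool:
    """True if any emergency keyword appears in the normalized message."""
    text = normalize(message)
    return any(p.search(text) for p in _PATTERNS)


if is_emergency("My father has chest pain"):
    print(EMERGENCY_REPLY)  # the human alert would be fired here as well
```

Normalizing first matters because patients type "cant breathe", "can’t breathe", and "CAN'T BREATHE!!" interchangeably. Plain keyword matching will still miss misspellings and messages in other languages, which is one more reason the human escalation path has to stay open.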

Layer 2 — Clinical Question Deflection: Build a separate keyword list for clinical content: diagnosis, symptoms, medication, dosage, side effects, test results mean, treatment, which medicine, should I take, etc.

These trigger: “For clinical questions, please speak with our medical team at [Number] or book an appointment with Dr. [Name].”

Never attempt to answer. Always deflect to human.

Layer 3 — Disclaimer in Chatbot Introduction: First message in any WhatsApp healthcare chatbot flow should include: “Note: This chatbot assists with appointments, reports, and information. For medical advice — please consult our doctors.”

One sentence. Legally protective. Expectation-setting.

Layer 4 — Human Escalation Always Available: At every stage of the chatbot flow — patient can type “SPEAK TO SOMEONE” or “HUMAN” and be immediately routed to a human agent. No flow so deep that a patient can’t find their way to a person.
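The order in which these four layers are checked matters as much as the layers themselves. Here is a hedged Python sketch of one possible ordering: emergency first, then human escalation, then clinical deflection, then the normal menu with the disclaimer on the first message. The term lists are tiny placeholders, and `route`, `Reply`, and the "Connecting you" wording are hypothetical names and text of my own.

```python
from dataclasses import dataclass

# Placeholder term lists. Real ones are far longer and clinically reviewed.
EMERGENCY_TERMS = ("chest pain", "unconscious", "stroke", "seizure")
HUMAN_TERMS = ("speak to someone", "human")
CLINICAL_TERMS = ("dosage", "side effects", "symptoms", "diagnosis", "which medicine")

DISCLAIMER = (
    "Note: This chatbot assists with appointments, reports, and information. "
    "For medical advice, please consult our doctors."
)
MAIN_MENU = "Appointment / Reports / Information / Feedback / Speak to Team"


@dataclass
class Reply:
    layer: str                 # which safety layer produced the reply
    text: str                  # message sent to the patient
    alert_staff: bool = False  # whether a human is notified at the same time


def route(message: str, first_message: bool = False) -> Reply:
    """Check the safety layers in priority order before any normal flow."""
    text = message.lower()
    if any(term in text for term in EMERGENCY_TERMS):
        return Reply("emergency",
                     "This sounds like a medical emergency. Call 108 now or go "
                     "to the nearest emergency room immediately. Do not wait.",
                     alert_staff=True)
    if any(term in text for term in HUMAN_TERMS):
        return Reply("human", "Connecting you to our team now.", alert_staff=True)
    if any(term in text for term in CLINICAL_TERMS):
        return Reply("clinical_deflection",
                     "For clinical questions, please speak with our medical team "
                     "at [Number] or book an appointment with Dr. [Name].")
    menu = f"{DISCLAIMER}\n{MAIN_MENU}" if first_message else MAIN_MENU
    return Reply("menu", menu)


print(route("What is the dosage for my child?").layer)  # clinical_deflection
```

Because emergency detection runs first, a message like "I want to speak to someone about chest pain" gets the emergency response, not a queue for an agent.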

The Bot Architecture — Flow Design

Your WhatsApp healthcare chatbot structure should follow a simple hierarchy:

Level 1 — Main Menu: Appointment / Reports / Information / Feedback / Speak to Team

Level 2 — Sub-menus under each:

- Appointment → New / Existing / Rescheduling

- Reports → Lab Reports / Prescriptions / Discharge Summary

- Information → Timings / Fees / Doctors / Directions / Visiting Hours

Level 3 — Action: Each sub-menu leads to a specific action — booking, delivery, information. All administrative. All within permitted boundaries.

Escalation exits at every level: At every menu — the option to speak to a human agent exists. Always.
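One simple way to guarantee that exit is to append it in code instead of relying on someone remembering to add it to each menu. A minimal Python sketch, assuming the menu is stored as a plain dictionary; `MENU` and `options_at` are hypothetical names of my own:

```python
ESCALATION = "Speak to Team"

# Level 1 keys map to their Level 2 sub-menus, as described above.
MENU = {
    "Appointment": ["New", "Existing", "Rescheduling"],
    "Reports": ["Lab Reports", "Prescriptions", "Discharge Summary"],
    "Information": ["Timings", "Fees", "Doctors", "Directions", "Visiting Hours"],
    "Feedback": [],
}


def options_at(path: list[str]) -> list[str]:
    """Return the options shown at a menu path, always ending with the human exit."""
    if not path:
        options = list(MENU)              # Level 1: main menu
    elif len(path) == 1 and path[0] in MENU:
        options = list(MENU[path[0]])     # Level 2: sub-menu
    else:
        options = []                      # Level 3: an action, no further choices
    return options + [ESCALATION]


print(options_at([]))
print(options_at(["Reports"]))
print(options_at(["Reports", "Lab Reports"]))
```

Even at Level 3, where there are no further choices, the patient still sees "Speak to Team".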

Real Numbers — Pune Multispeciality Hospital (WhatsApp Healthcare Chatbot Implementation)

Specific. Documented. Real.

500-bed multispeciality hospital. Pune. It implemented a WhatsApp healthcare chatbot across OPD appointment management, lab report delivery, patient reminders, and general information.

Before WhatsApp healthcare chatbot:

- Daily inbound queries to reception: 280-320 calls + 180-200 walk-ins for administrative queries

- Staff time on administrative queries: estimated 40% of front desk capacity daily

- After-hours query capture rate: near zero (no system)

- Patient experience rating on administrative processes: 2.9/5

After 90 days with WhatsApp healthcare chatbot:

- Administrative queries handled by bot: 67% of total

- Remaining 33% (complex cases, actual clinical queries, complaints): handled by humans

- After-hours query capture rate: 94% (bot runs 24×7)

- Staff time on administrative queries: reduced to 18% of front desk capacity (freed 22% for patient-facing service)

- Patient experience rating on administrative processes: 4.3/5

And — critically — zero incidents of clinical harm, regulatory complaint, or patient complaint about the bot’s medical conduct.

Zero. In 90 days. Across 280+ daily interactions.

Because the boundaries were built right. Emergency detection was functioning. Clinical questions were deflected consistently. Human escalation was always accessible.

The WhatsApp healthcare chatbot didn’t try to be a doctor. It was an excellent administrative assistant. And in that role — it performed brilliantly.

Financial impact:

Staff time freed: 22% of front desk capacity × 4 front desk staff × 8 hours × Rs.150/hour × 26 days = approximately Rs.27,456/month.

After-hours queries captured: 94% vs near zero previously. Estimated 45-50 additional appointment bookings monthly from after-hours capture. At Rs.1,500 average appointment value = Rs.67,500-75,000/month.

Patient experience improvement driving new patient referrals: conservative 20 additional new patients monthly. At Rs.2,000 average per new patient OPD = Rs.40,000/month.

Total monthly impact: approximately Rs.1,35,000-1,42,500/month.

Platform cost: Rs.60,000/year = Rs.5,000/month.

27-28x monthly ROI.
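If you want to rerun this arithmetic with your own numbers, the calculation above can be sketched in a few lines of Python. All inputs are the figures quoted in this section. The function name `monthly_impact` is mine.

```python
def monthly_impact(extra_bookings: int) -> int:
    """Monthly impact in rupees, using the figures quoted in this section."""
    # 22% of capacity x 4 staff x 8 hours x Rs.150/hour x 26 days
    staff_time = round(0.22 * 4 * 8 * 150 * 26)   # 27,456
    after_hours = extra_bookings * 1500           # Rs.1,500 per appointment
    referrals = 20 * 2000                         # 20 new patients x Rs.2,000
    return staff_time + after_hours + referrals


PLATFORM_COST_PER_MONTH = 60_000 // 12            # Rs.5,000

for bookings in (45, 50):
    total = monthly_impact(bookings)
    roi = round(total / PLATFORM_COST_PER_MONTH, 1)
    print(f"{bookings} bookings: Rs.{total:,} per month, {roi}x platform cost")
```

With 45 and 50 extra bookings this gives Rs.134,956 and Rs.142,456 (Rs.1,34,956 and Rs.1,42,456 in Indian grouping), about 27.0x and 28.5x the platform cost, which is where the rounded totals above come from.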

What Healthcare Providers Get Wrong About WhatsApp Healthcare Chatbots

One mistake. And it comes from a good place — wanting to be maximally helpful to patients.

They try to make the chatbot too smart about health.

“If the patient types ‘fever’ — let’s give them some home remedies while they wait for their appointment.”

Sounds helpful. Is actually dangerous territory. Depending on the context — that “fever” could be a malaria presentation, a dengue case, a post-surgical complication, or simply a mild viral. Home remedy advice for any of these varies wildly.

The instinct to help patients more through the bot is understandable. The implementation of that instinct through clinical content is where things go wrong.

Helpful and outside clinical territory: “We’ve booked your appointment with Dr. [Name] for tomorrow at 10am. Please stay well hydrated. See you then! 😊”

Helpful-sounding but inside clinical territory, which bots must not enter: “For your fever, take paracetamol every 6 hours and rest. If it’s above 103°F for more than 3 days, come to our OPD.”

Both sound helpful. Only the first one is appropriate for a WhatsApp healthcare chatbot to say.

Actually wait — I want to address one more thing specifically. The “AI-powered diagnosis” chatbot trend. There are products being marketed to healthcare providers that use AI to assess symptoms and suggest diagnoses via chatbot. Some are even marketed as “pre-consultation triage” tools.

Honestly? I think this space is significantly overhyped for the Indian market right now. Not because AI can’t be powerful in healthcare — it can be. But because the regulatory environment, the liability landscape, and the patient safety requirements in India make this a very high-risk deployment for any hospital or clinic.

A WhatsApp healthcare chatbot that does appointment booking and report delivery — that’s a well-understood, well-bounded use case with clear value and manageable risk.

A WhatsApp chatbot that attempts symptom triage or preliminary diagnosis — you’re in regulatory grey territory that could expose the healthcare provider to significant liability if anything goes wrong.

Stick to what’s clearly safe and clearly valuable. That’s the advice of 4+ years of watching this space.

For how the WhatsApp healthcare chatbot connects to the complete patient communication system — our WhatsApp for hospitals guide shows how appointment booking, lab reports, reminders, and feedback all work together.

Setting Up a WhatsApp Healthcare Chatbot — Exactly What to Do

Step 1 — Map all administrative queries your front desk handles

Over one week — have a staff member log every inbound call and walk-in query. Categorise: appointment booking, report query, directions, fee query, clinical question, complaint, other. This gives you the actual distribution of your query types.

Typical finding: 65-75% are pure administrative. These are chatbot-appropriate.
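Once the week's log exists, turning it into a distribution is a few lines of Python. This is a sketch only: the sample log is made up for illustration, and treating exactly these four categories as "administrative" is my assumption based on the category list above.

```python
from collections import Counter

# Categories from the logging step that count as chatbot-appropriate.
ADMIN_CATEGORIES = {"appointment booking", "report query", "directions", "fee query"}


def query_distribution(log: list[str]) -> dict[str, float]:
    """Percentage share of each category, most common first."""
    counts = Counter(log)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cat: round(100 * n / total, 1) for cat, n in counts.most_common()}


def admin_share(log: list[str]) -> float:
    """Percentage of logged queries that are purely administrative."""
    if not log:
        return 0.0
    admin = sum(1 for cat in log if cat in ADMIN_CATEGORIES)
    return round(100 * admin / len(log), 1)


# Hypothetical one-day sample, not real hospital data.
sample = (["appointment booking"] * 6 + ["fee query"]
          + ["clinical question"] * 2 + ["complaint"])
print(query_distribution(sample))
print(admin_share(sample))
```

The administrative share tells you how much of the front desk load the bot can realistically take. The clinical and complaint shares tell you how much must stay with humans.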

Step 2 — Build emergency detection first

Before any other flow — build the emergency keyword list and the immediate escalation response. Test it. Test it again. Show it to your medical director for sign-off on the keyword list and the response language.

This is the safety foundation. Everything else builds on top of it.

Step 3 — Build clinical query deflection

Second priority. Build the keyword/intent list for clinical questions. Test the deflection responses. Ensure they’re warm, not robotic. “I’m not able to help with medical questions — please call our team at [Number] or book with Dr. [Name]” — human-sounding, helpful, clear.

Step 4 — Build the administrative flows

Following the hierarchy: main menu → sub-menus → actions. Each action connects to your actual systems — appointment software, LIS for reports, HMS for patient records.

Step 5 — Build human escalation at every level

Every menu has a “Speak to our team” option. It works. It routes to a human immediately. Not to another bot menu. Not to a hold message. A human.

Step 6 — Legal review of bot flows

Before going live — have your hospital’s legal or compliance team review the bot’s response language. Especially the clinical deflection messages and the disclaimer. This protects you and ensures you’re within regulatory boundaries.

Step 7 — Pilot with 100 patients

Run for 2 weeks with limited deployment. Review every conversation. Look for: clinical questions that slipped through deflection, emergency situations that were not handled correctly, confusing flows, high drop-off points. Fix before full deployment.
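Manual review of every conversation is still the point of the pilot, but a small script can pre-sort what the reviewer looks at first. Here is a rough Python sketch, assuming conversations are exported as simple Python structures. The field names, the term list, and the deflection marker are all hypothetical.

```python
from collections import Counter

CLINICAL_TERMS = ("dosage", "side effects", "symptoms", "which medicine")
DEFLECTION_MARKER = "please call our team"  # phrase expected in every deflection


def leaked_clinical_turns(turns: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return (patient_message, bot_reply) pairs where a clinical term
    appeared but the bot's reply was not the deflection message."""
    return [
        (msg, reply) for msg, reply in turns
        if any(term in msg.lower() for term in CLINICAL_TERMS)
        and DEFLECTION_MARKER not in reply.lower()
    ]


def drop_off_points(conversations: list[dict]) -> Counter:
    """Count the last step reached in conversations that were abandoned."""
    return Counter(
        c["steps"][-1] for c in conversations
        if c["steps"] and not c["completed"]
    )


# Made-up pilot data for illustration.
turns = [
    ("What is the dosage for my child?", "Take 5 ml twice a day."),
    ("What are the side effects?",
     "I'm not able to help with medical questions. Please call our team at [Number]."),
]
print(leaked_clinical_turns(turns))  # only the first pair is flagged
```

Anything this flags goes to the top of the review pile. Anything it does not flag still gets read, because keyword checks miss paraphrases.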

For how to calculate the complete financial impact of WhatsApp healthcare chatbot implementation on your specific setup — our WhatsApp automation ROI guide has the healthcare-specific framework.

So, a direct message to healthcare providers:

A WhatsApp healthcare chatbot is a powerful tool. But only when you respect what it can and cannot do.

Administrative assistant — excellent. Available 24×7. Patient experience improver. Staff time saver.

Clinical assistant — never. Not now. Not with current technology and regulatory clarity in India.

Build it right — with clear boundaries, safety architecture, and human escalation — and it becomes one of the highest-ROI investments your clinic or hospital makes this year.

Build it wrong — by trying to make it do too much — and it becomes a liability.

The choice of which path to take is entirely in the design. And good design is what we do.

Tap below. 👇 Tell us your hospital or clinic type, daily inbound query volume, and current front desk capacity — we’ll design your WhatsApp healthcare chatbot within safe, compliant boundaries and show you the operational impact for your specific setup.

— Mohit Shah | 15+ years in IT industry | 4+ years in WhatsApp automation | Now helping businesses figure out what actually works

Q1: What can a WhatsApp healthcare chatbot legally do for Indian hospitals and clinics?

A1: A WhatsApp healthcare chatbot can handle all administrative functions — appointment booking and management, patient reminders (48-hour, 24-hour, morning-of), lab report and prescription PDF delivery, department and doctor information, fee queries, directions and parking, visiting hours, post-discharge medication reminders (not dosage guidance), patient feedback collection, and insurance information routing. These administrative use cases cover 65-75% of all inbound queries at most Indian healthcare providers and deliver substantial operational value without clinical or regulatory risk.

Q2: What should a WhatsApp healthcare chatbot never do in India?

A2: A WhatsApp healthcare chatbot must never perform symptom assessment, clinical triage, diagnosis, medication dosage guidance, or test result interpretation. It must never delay emergency response — any emergency keywords (chest pain, unconscious, can’t breathe, etc.) must trigger an immediate response directing patients to call 108 or visit the nearest ER. Mental health distress language must route to crisis helplines immediately. Any clinical question must be deflected to human healthcare professionals. Building a chatbot that attempts these clinical functions exposes healthcare providers to significant liability and patient safety risk.

Q3: What safety architecture does a WhatsApp healthcare chatbot require for healthcare compliance in India?

A3: A compliant WhatsApp healthcare chatbot requires four mandatory safety layers: emergency keyword detection (25-30+ keywords that bypass all flows and trigger immediate emergency response), clinical question deflection (routing all medical questions to human staff), a disclaimer in the opening message clarifying the bot’s administrative-only role, and human escalation available at every stage of every flow. Before deployment, all bot flows and response language should be reviewed by the hospital’s medical and legal teams, and a 2-week pilot with conversation review should precede full deployment to catch any clinical content that slipped through deflection layers.